HO-Flow: Generalizable Hand-Object Interaction Generation with Latent Flow Matching

Abstract

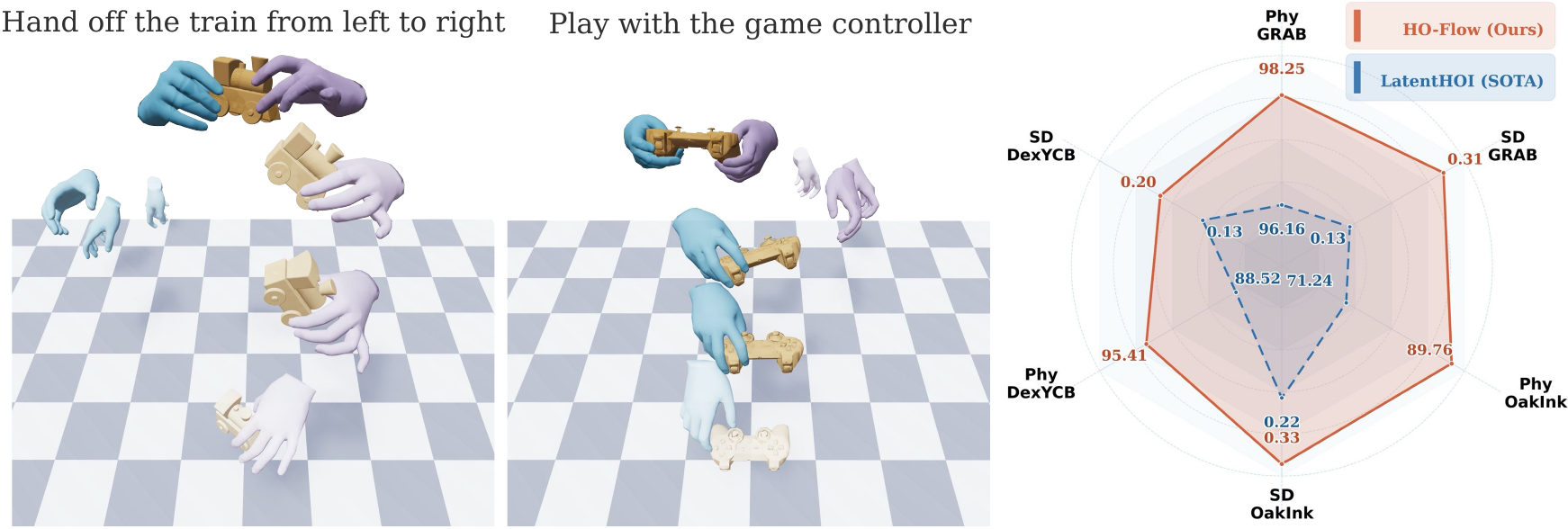

Generating realistic 3D hand-object interactions is a fundamental challenge in computer vision and robotics, requiring both temporal coherence and a high degree of physical plausibility. Existing methods remain limited in their ability to learn expressive motion representations for generation and to perform temporal reasoning. In this paper, we present HO-Flow, a framework for synthesizing realistic hand-object motion sequences from text descriptions. HO-Flow first employs an interaction-aware variational autoencoder that encodes sequences of hand and object motions into a unified latent manifold by incorporating hand and object kinematics, enabling the representation to capture rich interaction dynamics. It then leverages a masked flow matching model that combines auto-regressive temporal reasoning with continuous latent generation, improving temporal coherence. To further enhance generalization, HO-Flow predicts object motions relative to the initial frame, enabling effective pre-training on large-scale synthetic data. Experiments on the GRAB, OakInk, and DexYCB benchmarks demonstrate that HO-Flow achieves state-of-the-art performance in both physical plausibility and motion diversity for hand-object interaction synthesis.
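As a rough illustration of what "object motions relative to the initial frame" can mean, the sketch below re-expresses a sequence of rigid object poses in the coordinate frame of the first pose. The convention `T_rel[t] = inverse(T[0]) @ T[t]`, the 4x4 homogeneous matrix layout, and the function names are assumptions made for this example and are not taken from the paper.

```python
def matmul(a, b):
    """Multiply two 4x4 matrices given as nested lists."""
    return [[sum(a[i][k] * b[k][j] for k in range(4)) for j in range(4)]
            for i in range(4)]

def rigid_inverse(pose):
    """Invert a rigid transform [[R, t], [0, 1]] as [[R^T, -R^T t], [0, 1]]."""
    rot_t = [[pose[j][i] for j in range(3)] for i in range(3)]  # transpose of R
    trans = [pose[i][3] for i in range(3)]
    new_trans = [-sum(rot_t[i][k] * trans[k] for k in range(3)) for i in range(3)]
    inverse = [rot_t[i] + [new_trans[i]] for i in range(3)]
    inverse.append([0.0, 0.0, 0.0, 1.0])
    return inverse

def to_relative(poses):
    """Express every pose in the frame of the first pose (assumed convention)."""
    inv_first = rigid_inverse(poses[0])
    return [matmul(inv_first, pose) for pose in poses]
```

Under this convention the first relative pose is always the identity, and applying the same global rigid transform to every frame leaves the relative sequence unchanged. That invariance is one plausible reason such a representation transfers across datasets with different world coordinate systems.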

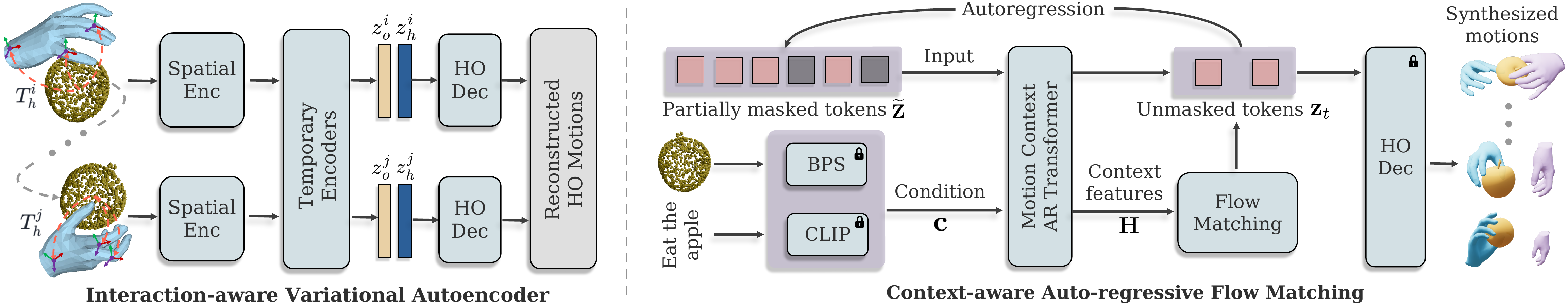

Method Overview

Our approach. HO-Flow combines two components for text-driven hand-object interaction generation. First, an interaction-aware variational autoencoder (Inter-VAE) encodes hand and object motion sequences into structured latent tokens that capture both global trajectories and fine-grained contact-rich coordination through hand-centric geometric cues. Second, a context-aware masked auto-regressive flow matching model conditions on object geometry and language, aggregates temporal context, and predicts motion latents progressively in continuous space, leading to more coherent and physically plausible interaction synthesis.
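The generation stage described above can be pictured with a toy sketch of masked, progressive sampling in a continuous latent space. It uses the standard straight-path flow matching formulation (interpolate between noise and data, regress the constant velocity, then integrate with Euler steps at sampling time). Everything specific here is an assumption for illustration: the function names, the random unmasking order, the number of tokens filled per round, and the `make_velocity_fn` callback that stands in for a learned network conditioned on object geometry, language, and already generated latents.

```python
import random

def interpolate(x0, x1, t):
    """Point at time t on the straight path from noise x0 to data x1."""
    return [(1.0 - t) * a + t * b for a, b in zip(x0, x1)]

def target_velocity(x0, x1):
    """Training target for the velocity network along the straight path."""
    return [b - a for a, b in zip(x0, x1)]

def euler_sample(velocity_fn, x0, num_steps=10):
    """Integrate dx/dt = velocity_fn(x, t) from t=0 to t=1 with Euler steps."""
    x = list(x0)
    dt = 1.0 / num_steps
    for k in range(num_steps):
        t = k * dt
        v = velocity_fn(x, t)
        x = [xi + dt * vi for xi, vi in zip(x, v)]
    return x

def generate_masked(num_tokens, dim, tokens_per_round, make_velocity_fn, rng):
    """Fill a fully masked latent sequence a few tokens per round.

    make_velocity_fn(index, context) is a hypothetical stand-in for the
    conditioned model; context holds the latents known so far (None = masked).
    """
    latents = [None] * num_tokens
    order = list(range(num_tokens))
    rng.shuffle(order)  # assumed random unmasking order
    for start in range(0, num_tokens, tokens_per_round):
        context = list(latents)  # tokens in one round share the same context
        for idx in order[start:start + tokens_per_round]:
            noise = [rng.gauss(0.0, 1.0) for _ in range(dim)]
            latents[idx] = euler_sample(make_velocity_fn(idx, context), noise)
    return latents
```

In a real system the velocity function would be a trained network and the resulting latents would be decoded by the Inter-VAE into hand and object motion. The sketch only shows how iterative unmasking and continuous flow-based sampling can fit together.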

Video Demos

Browse the cropped HO-Flow demo videos for OakInk and GRAB objects. Each card shows the generated interaction clip together with its task description.

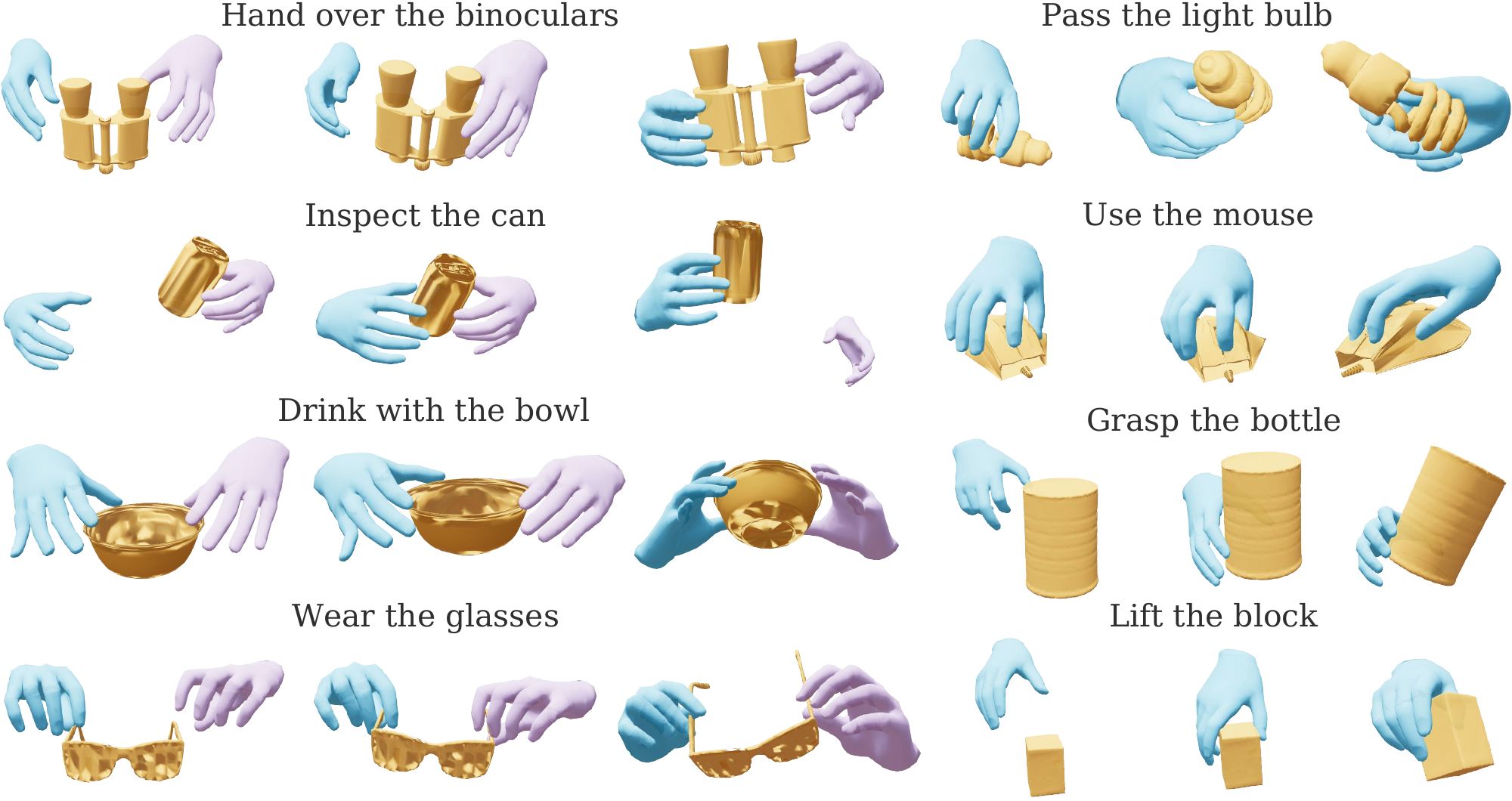

Qualitative Results

Generalization across benchmarks. HO-Flow synthesizes realistic hand and object motions in both bi-manual and single-hand settings, covering challenging evaluation splits on OakInk and DexYCB. The generated sequences preserve natural contacts while remaining diverse across different objects and task descriptions.

BibTeX

@article{chen2026hoflow,
  author = {Chen, Zerui and Potamias, Rolandos Alexandros and Chen, Shizhe and Deng, Jiankang and Schmid, Cordelia and Zafeiriou, Stefanos},
  title = {{HO-Flow}: Generalizable Hand-Object Interaction Generation with Latent Flow Matching},
  booktitle = {arXiv:2604.10836},
  year = {2026},
}

Copyright

The documents contained in these directories are included by the contributing authors as a means to ensure timely dissemination of scholarly and technical work on a non-commercial basis. Copyright and all rights therein are maintained by the authors or by other copyright holders, notwithstanding that they have offered their works here electronically. It is understood that all persons copying this information will adhere to the terms and constraints invoked by each author's copyright.